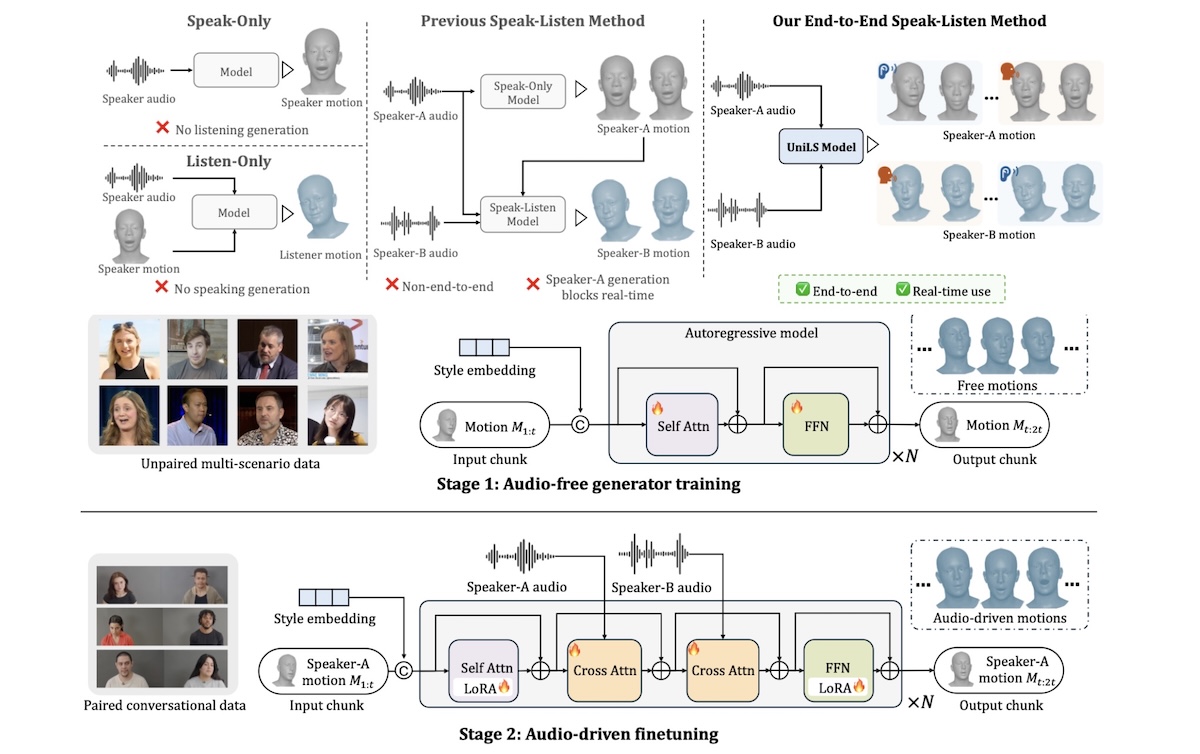

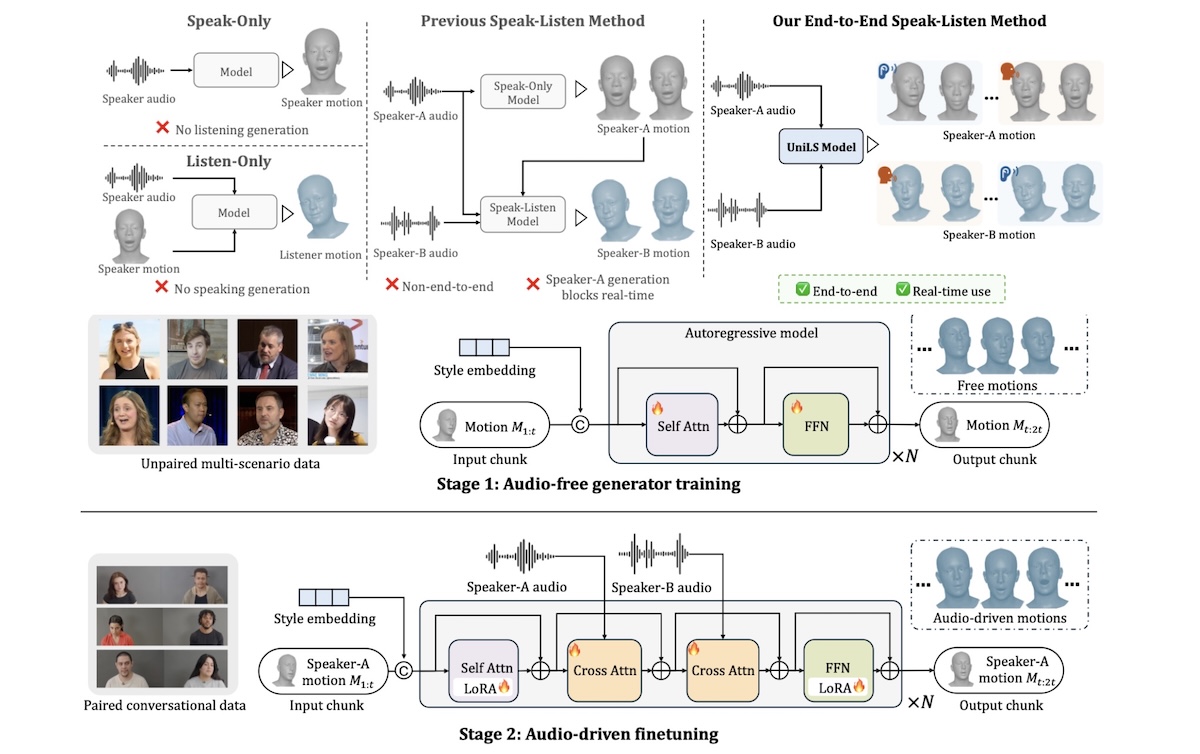

UniLS: End-to-End Audio-Driven Avatars for Unified Listening and Speaking

UniLS generates realistic, dual-track audio-driven expressions for both speakers and listeners.

I received my PhD from the University of Tokyo in 2026 under the guidance of Prof. Tatsuya Harada.

Prior to that, I received my Master's degree from Peking University, where I was advised by Prof. Yasha Wang, and I completed my Bachelor's degree at Tongji University.

For my full CV, please see here.

My research focuses on 3D human-centric computer vision, specifically human reconstruction and animation.

My research goals are to enable everyone to easily create digital avatars for games and virtual worlds, and to improve machine understanding of humans.

🎓 [2026.03] Officially a PhD!

✨ [2026.02] Two papers accepted to CVPR 2026!

📜 [2025.08] Starting my internship at Meta Reality Labs Pittsburgh!

📜 [2025.08] Two papers accepted to NeurIPS 2025!

📜 [2025.08] One paper accepted to SIGGRAPH Asia 2025!

📜 [2025.01] One paper accepted to CVPR 2025!

📜 [2024.09] One paper accepted to NeurIPS 2024!

📜 [2024.07] Starting my visiting studies at Princeton University!

📜 [2024.01] One paper accepted to ICLR 2024!

📜 [2023.04] Starting my PhD studies at the University of Tokyo!

📜 [2023.04] One paper accepted to ICCV 2023!

[2025.08 - 2026.01] Research Scientist Intern at Meta Reality Labs Pittsburgh.

[2025.05 - 2025.10] Student Researcher at Snap Research NYC (remote).

[2024.07 - 2024.09] Visiting Student Researcher at Princeton University, supervised by Prof. Jia Deng.

[2022.12 - 2023.11] Research Intern at International Digital Economy Academy (IDEA).

[2021.03 - 2022.10] Research Engineer at Tencent ARC Lab.

[2020.06 - 2021.02] Research Intern at Microsoft Research Asia.

[2019.01 - 2020.06] Research Intern at Megvii Technology, supervised by Dr. Xiangyu Zhang.

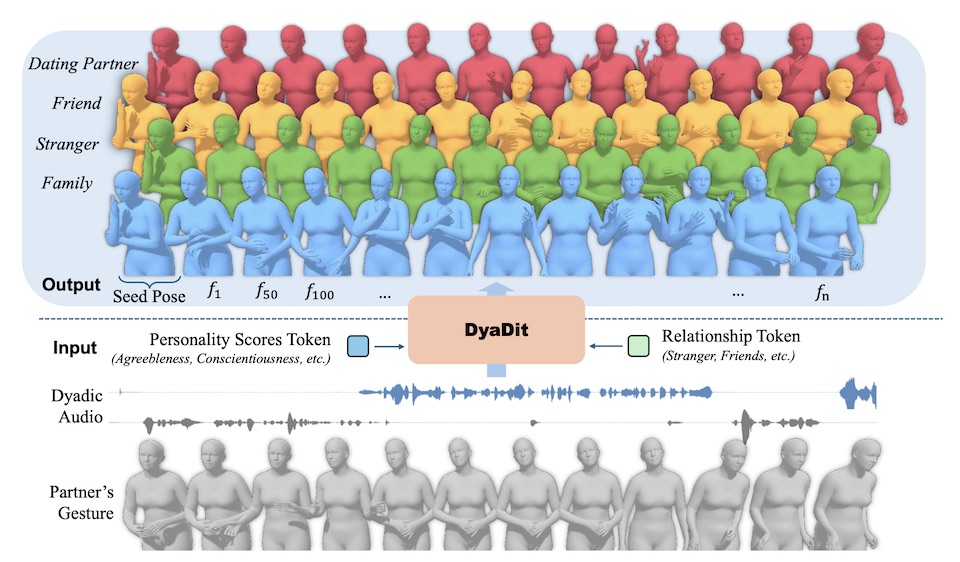

DyaDiT: A Multi-Modal Diffusion Transformer for Socially-Aware Dyadic Gesture Generation

DyaDiT generates digital human gestures by processing dyadic audio signals and social context, effectively capturing the mutual dynamics between two speakers.

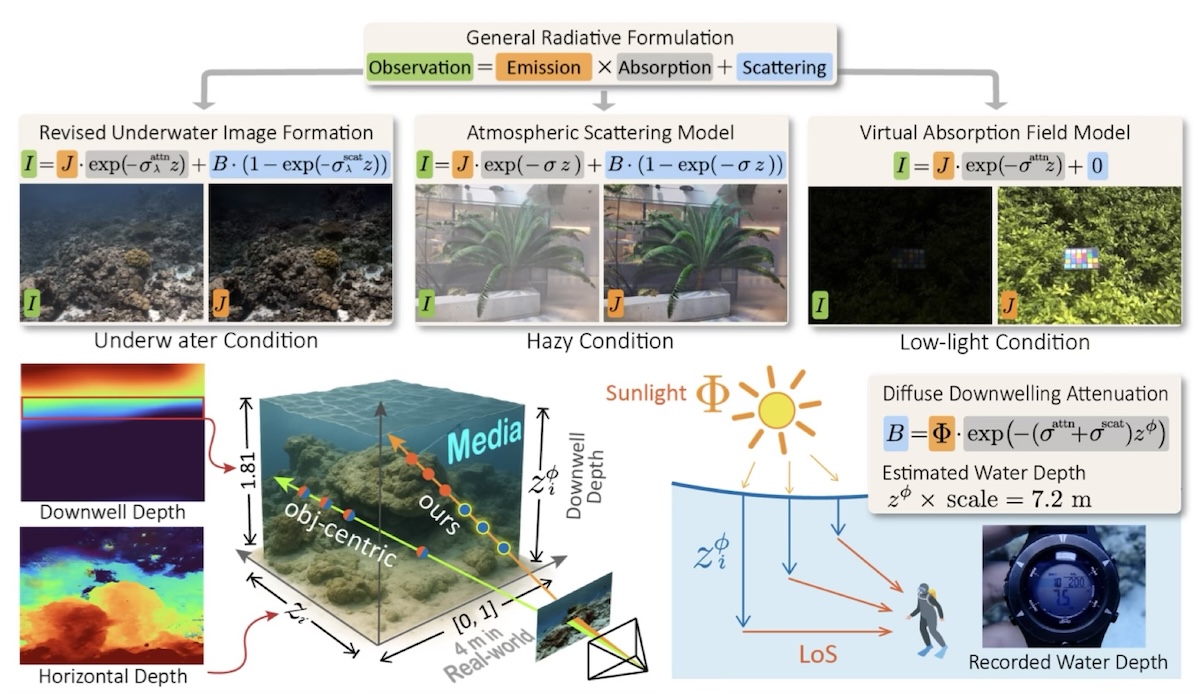

I2-NeRF: Learning Neural Radiance Fields Under Physically-Grounded Media Interactions

I2-NeRF is a novel neural radiance field framework that enhances isometric and isotropic metric perception under media degradation.

Intend to Move: A Dataset for Intention and Scene Aware Human Motion Prediction

Intend to Move is a new dataset for embodied AI, focusing on intention-aware long-term human motion in real-world environments.

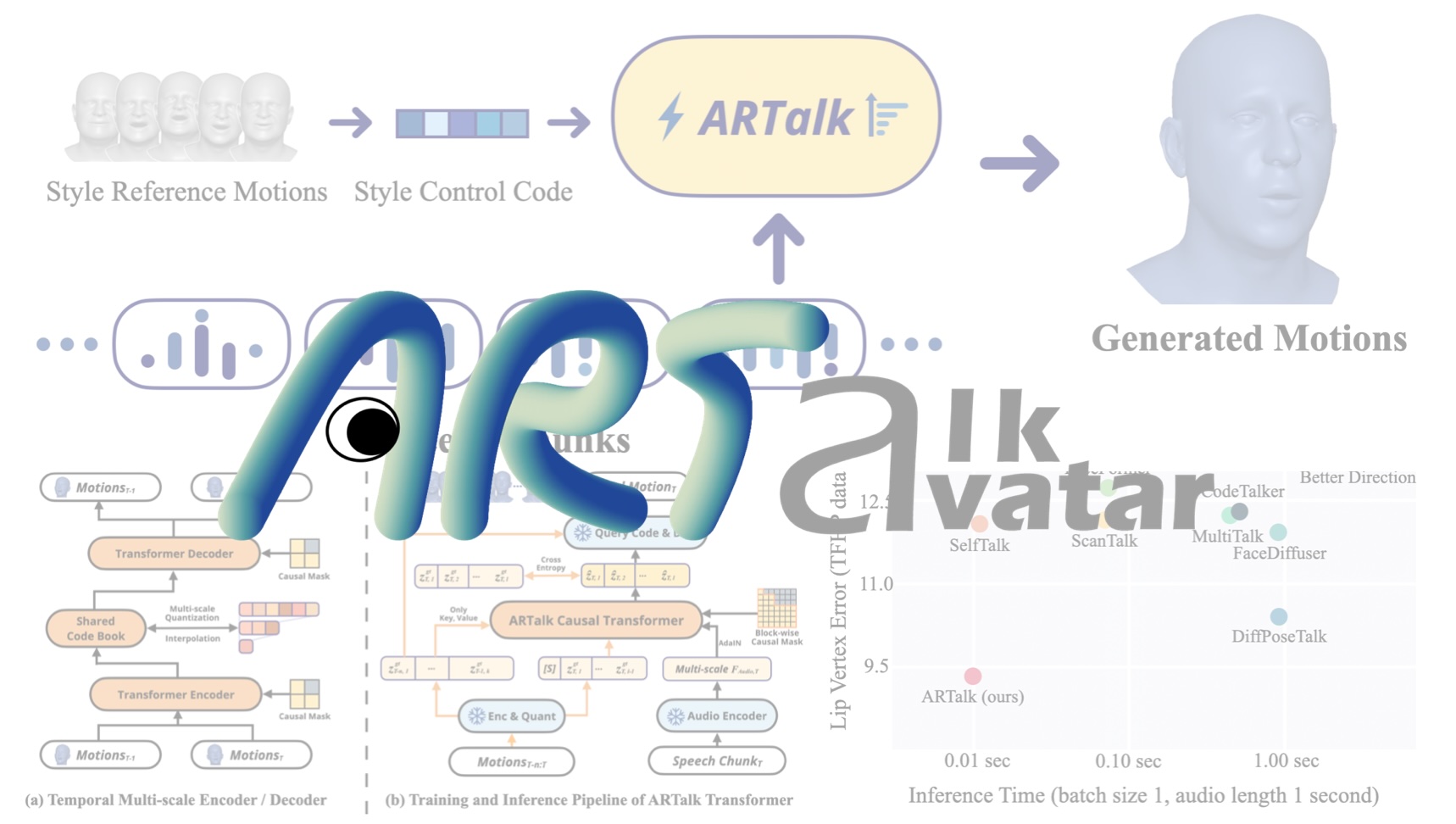

ARTalk: Speech-Driven 3D Head Animation via Autoregressive Model

ARTalk generates realistic 3D head motions (lip sync, blinking, expressions, head poses) from audio in real time.

Luminance-GS: Adapting 3D Gaussian Splatting to Challenging Lighting Conditions with View-Adaptive Curve Adjustment

A simple multi-view curve adjustment method for novel view synthesis under challenging lighting conditions, including low-light, overexposure, and varying exposure.

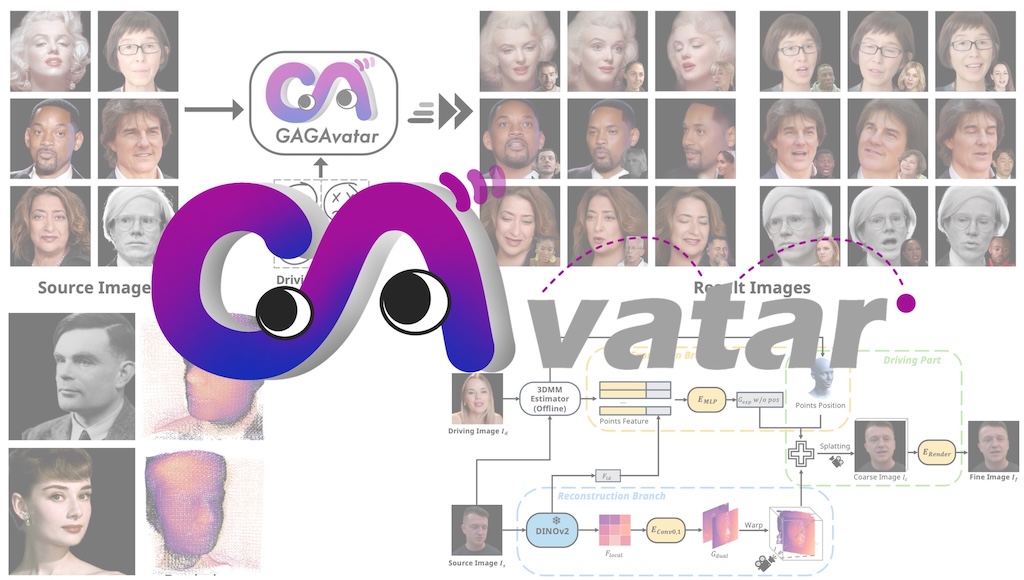

Generalizable and Animatable Gaussian Head Avatar

The first generalizable 3DGS framework for head avatars that can reconstruct realistic avatars from a single image and achieve real-time reenactment.

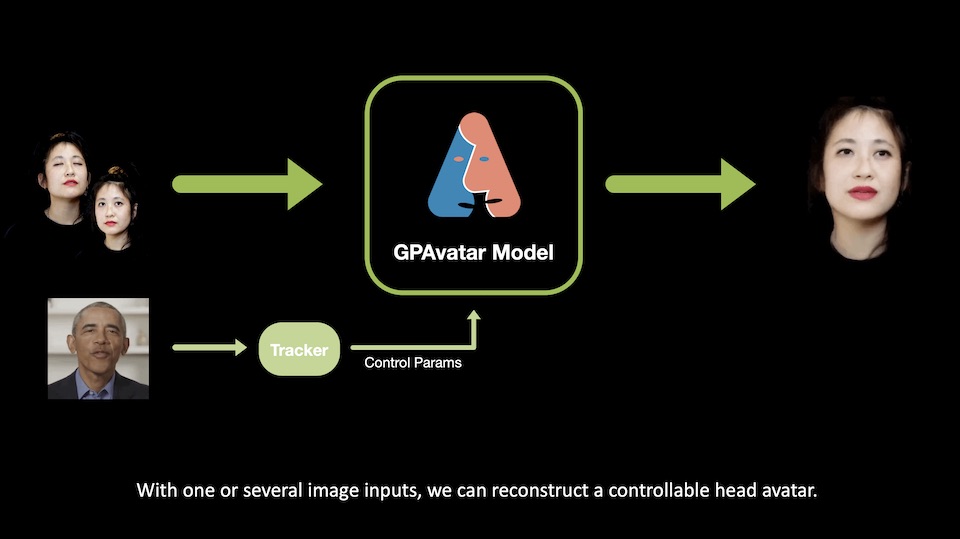

GPAvatar: Generalizable and Precise Head Avatar from Image(s)

A framework that reconstructs 3D head avatars from one or several images in a single forward pass.

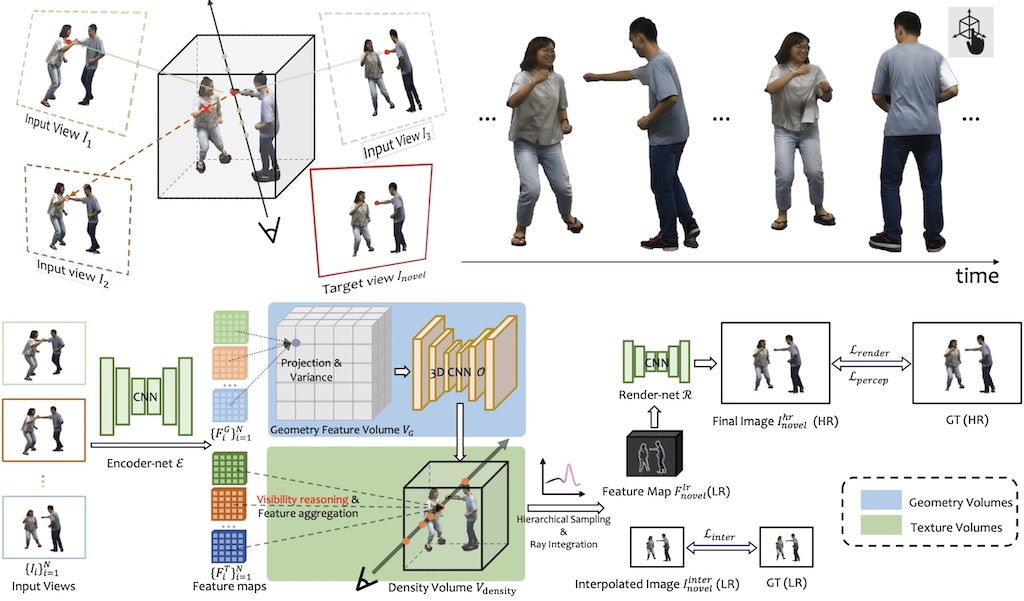

Real-time High-resolution View Synthesis of Complex Scenes with Explicit 3D Visibility Reasoning

A real-time high-resolution novel view synthesis method from sparse view inputs.

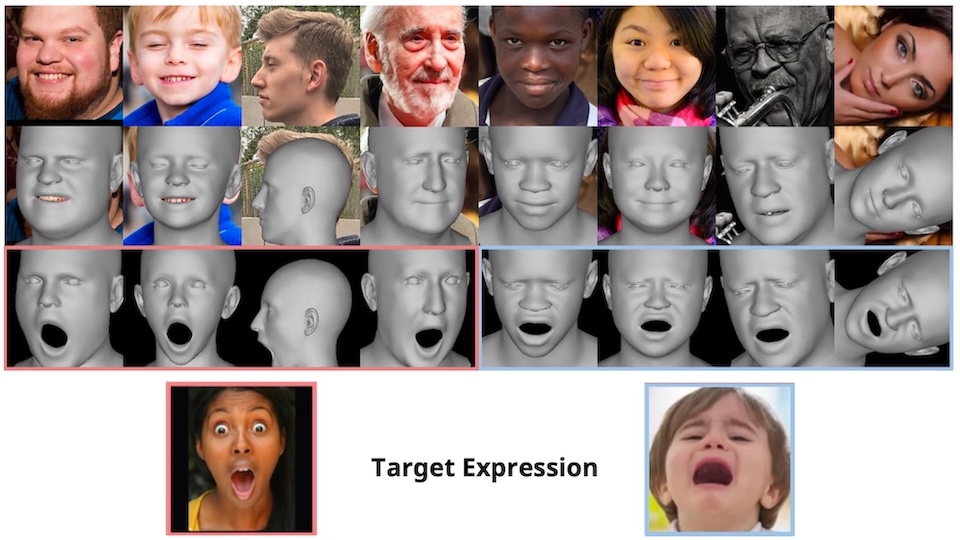

Accurate 3D Face Reconstruction with Facial Component Tokens

A framework for 3D face reconstruction from monocular images based on transformers.

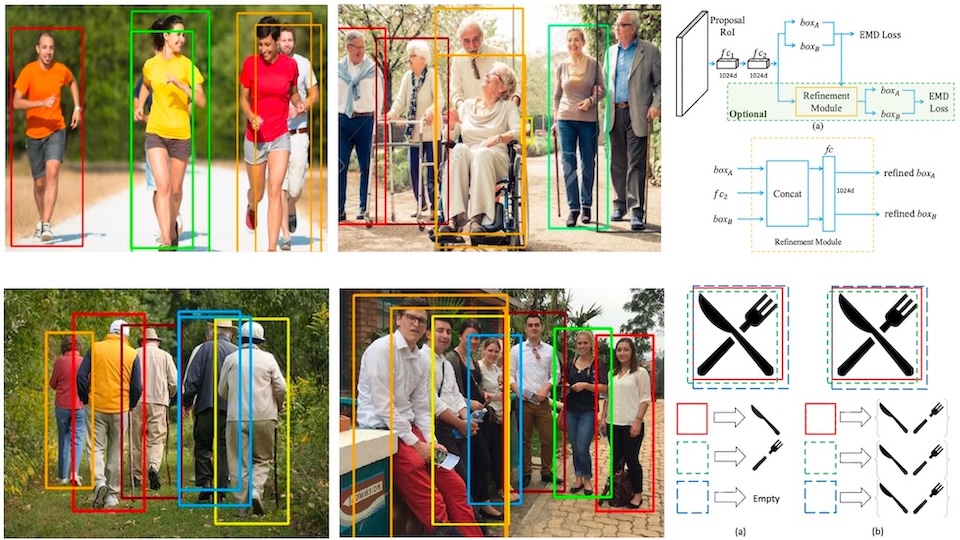

Detection in Crowded Scenes: One Proposal, Multiple Predictions

A nearly cost-free method to improve the detection performance in crowded scenes.